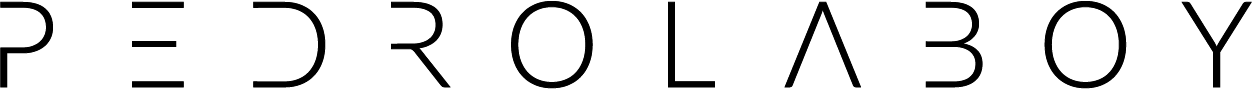

The transformation in retail that started in 2020 is not about new trends. Rather, it is defined by mass adoption of services that were previously offered by only selected retailers. The new purchase journey now takes four distinct paths: 1) drive and purchase – travel to a brick-and-mortar store; 2) click and pick – purchase online and pick up at the store; 3) click and deliver – purchase online and have the item delivered; and 4) subscribe and forget – subscribe online and have the item delivered at a regular interval (CPG brands). Consumers have adapted to these services and clearly see the trade-off between time/effort and money. The retailers that will come out on top will be those that can offer the most frictionless purchase journeys.

Notebook Thoughts: Using AI to Transform Talent Acquisition

Every HR department and talent acquisition agency knows that in today’s tight labor market it is extremely difficult to find the talent that meets the organization’s business needs. In other words, the way we go about matching talent to job openings is still pretty much in the dark ages. For employers, the process is slow, expensive and labor intensive. For applicants, it is often difficult to find open positions that are a fit.

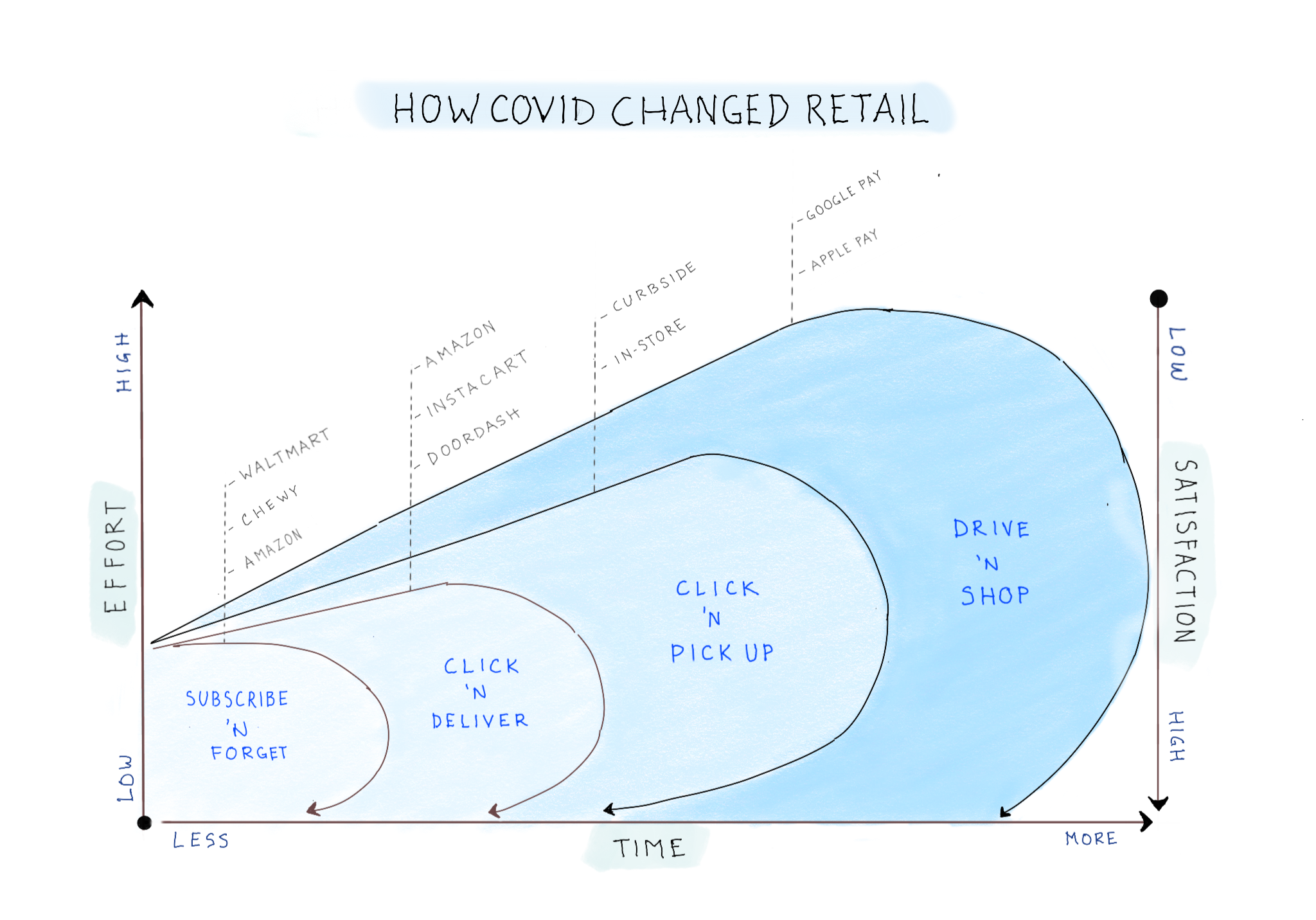

However, today we can leverage artificial intelligence (AI) and machine learning (ML) to transform the way we match talent to employers. An AI/ML solution could be built using multiple technologies, but building it on the Google or Amazon Cloud offers cost savings, scalability and speed that few can match.

The above is a sketched architecture of what the platform could look like using the Google Cloud. I will call this solution Deep Signal.

Sample Use Cases

So, what could you do with Deep Signal? Here is a small sample of use cases:

1. Candidate Sourcing and Placement – Source candidates faster and more accurately (decrease the time from first contact to placement)

2. Source Specialty Candidates – Identify difficult-to-find candidates with specialized skills

3. Candidate Recommendation Engine – Recommend candidates based on look-alike modeling (if you liked this resume, you will also like these other resumes)

4. Employer Recommendation Engine – Recommend employers based on look-alike modeling (if you are interested in this employer, you will also like these other employers)

5. Smart Search – Job search based on non-traditional features (commute time, vacation, etc.)

6. Next Best Action – Make recommendations based on actions taken by employer or candidate

7. Identify Prospective Clients – Identify prospective clients based on existing candidate database (this prospective employer will be interested in these candidates)
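To make the look-alike idea in use cases 3 and 4 more concrete, here is a minimal sketch using cosine similarity. The candidate IDs, the four skill dimensions and the scores are all made up for illustration; a real platform would derive its features from resumes and job data, and Deep Signal itself is not specified at this level of detail.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def look_alikes(liked_id, profiles, top_n=2):
    """Rank every other profile by similarity to the one that was liked."""
    target = profiles[liked_id]
    scored = [
        (other_id, cosine_similarity(target, vector))
        for other_id, vector in profiles.items()
        if other_id != liked_id
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]

# Hypothetical skill vectors: [python, sql, recruiting, design]
candidates = {
    "cand_a": [5, 4, 0, 0],
    "cand_b": [4, 5, 0, 1],
    "cand_c": [0, 0, 5, 2],
    "cand_d": [5, 3, 1, 0],
}

# "If you liked cand_a, you will also like..."
recommended = look_alikes("cand_a", candidates)
print(recommended)
```

The same function works for employers: swap the skill vectors for employer attributes and the ranking logic stays unchanged.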

Technical Requirements

Two things are needed to build an AI-driven platform. The first one is data. Without extensive categorical and historical data, you cannot build accurate machine learning models. The second thing we will need is computing power. Without enough computing power, it could take weeks or months to run our models. Both Amazon and Google offer solutions with more than enough computing power to handle anything you throw at them.

Final Thought

Transforming into a data-driven business model based on machine learning and artificial intelligence requires more than deploying new technologies. It often requires cultural and organizational shifts as the business adapts to the realignment of data, people, process and technology.

As always, questions and thoughts are welcome. If you give me a use case, I will think of ways ML/AI could be used.

Notebook Thoughts: Machine Learning for Dummies

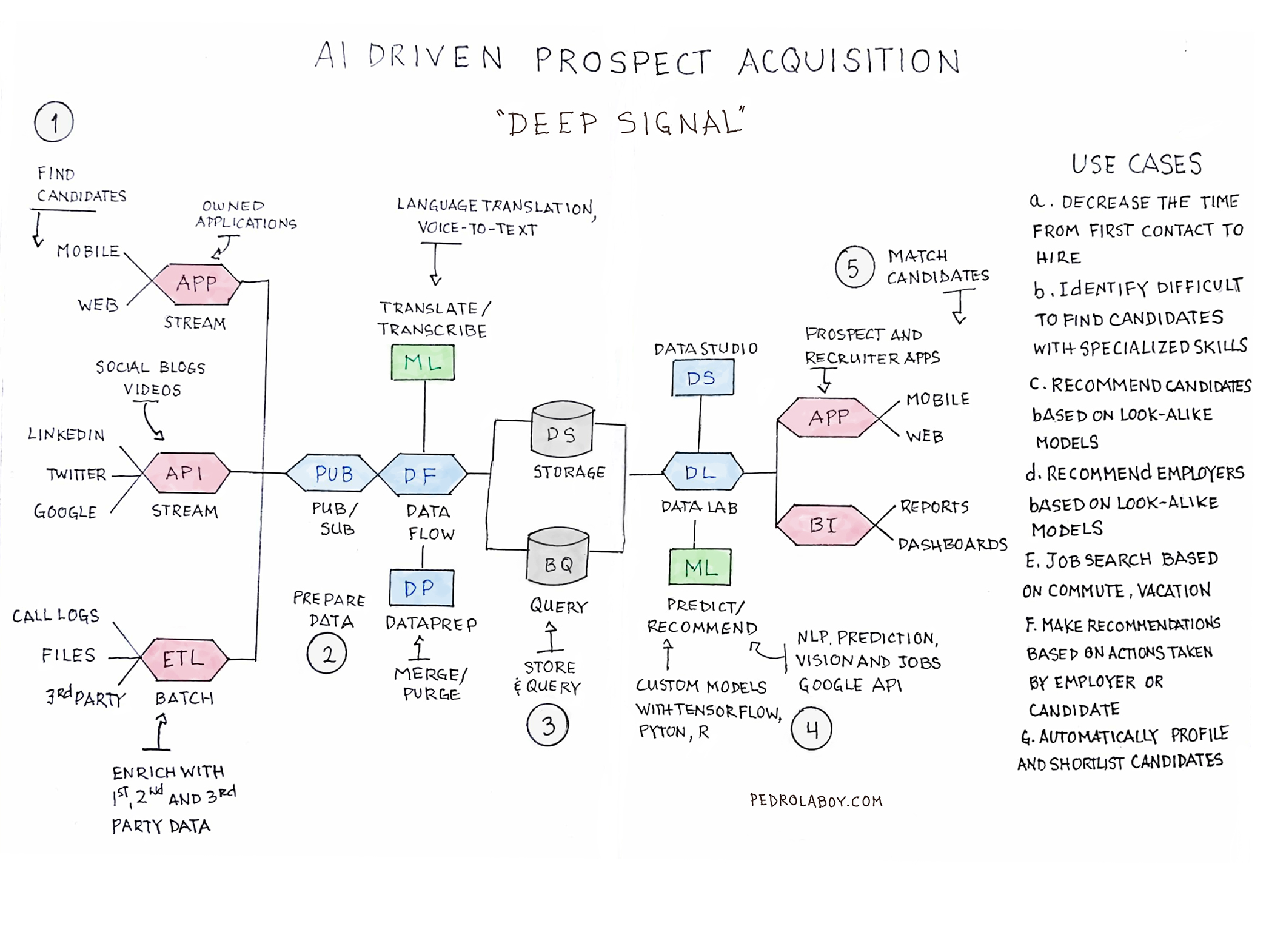

Machine learning is an evolving and complex science. If one takes into account all possible scenarios, dependencies and models, it would be impossible to sketch an all-encompassing explanation. So I will focus on what the non-data scientist should know about machine learning. That is, they should focus on understanding available data, machine learning types, algorithm models and business applications.

1. First, determine the nature of the available data. Do you have historical campaign data with historical results or an unstructured database of customer records? Or are you trying to make real-time decisions on streaming data?

2. The available data and the use case will determine the appropriate machine learning approach. There are three major types: supervised, unsupervised and reinforcement learning. a) Supervised Learning allows you to predict an outcome based on input and output data (e.g. churn). b) Unsupervised Learning allows you to categorize outcomes based on input data (e.g. segmentation). c) Reinforcement Learning allows you to react to an environment (e.g. driverless car).

3. Each machine learning type will use a number of algorithms. There are hundreds of variations. Supervised Learning typically uses regression or classification algorithms. Unsupervised Learning uses, but is not limited to, clustering algorithms. Reinforcement Learning will usually use some type of neural network algorithm.

4. The uses of machine learning are nearly limitless. Although I listed it here as the last step, determining the use case or business application should probably be the first step. For example, we would use logistic regression to determine whether a house would sell at a certain price or not and we would use linear regression to predict the future price of a house. You should keep in mind that machine learning business applications typically require the use of more than one machine learning type as well as multiple algorithms.
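The house example in step 4 can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: one input feature per model, tiny made-up datasets, and a hand-rolled gradient descent in place of a proper ML library. The function names and numbers are mine, not part of any standard API.

```python
import math

def fit_linear(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x

def sigmoid(z):
    """Squash any real number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(xs, labels, learning_rate=0.5, epochs=2000):
    """Logistic regression for one feature, trained by gradient descent."""
    weight, bias = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, labels):
            error = sigmoid(weight * x + bias) - y
            grad_w += error * x
            grad_b += error
        weight -= learning_rate * grad_w / n
        bias -= learning_rate * grad_b / n
    return weight, bias

# Linear regression: predict a price (made-up data).
sizes = [1.0, 1.5, 2.0, 2.5]      # thousands of square feet
prices = [200, 290, 410, 500]     # thousands of dollars
slope, intercept = fit_linear(sizes, prices)
predicted_price = slope * 3.0 + intercept

# Logistic regression: predict sold / not sold (made-up data).
premiums = [-1.0, -0.5, 0.0, 0.5, 1.0, 1.5]  # asking price vs. market, in 10% steps
sold = [1, 1, 0, 1, 0, 0]                    # 1 = sold, 0 = did not sell
weight, bias = fit_logistic(premiums, sold)
p_sold_cheap = sigmoid(weight * -1.0 + bias)
p_sold_pricey = sigmoid(weight * 1.5 + bias)

print(predicted_price, p_sold_cheap, p_sold_pricey)
```

The difference to notice is the output: the linear model returns a number on a continuous scale, while the logistic model returns a probability that we turn into a yes or no.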

I hope this sketch serves as a useful guide. Feel free to share and use as needed.

Thoughts and comments as well as suggestions for other sketched explanations are welcomed.

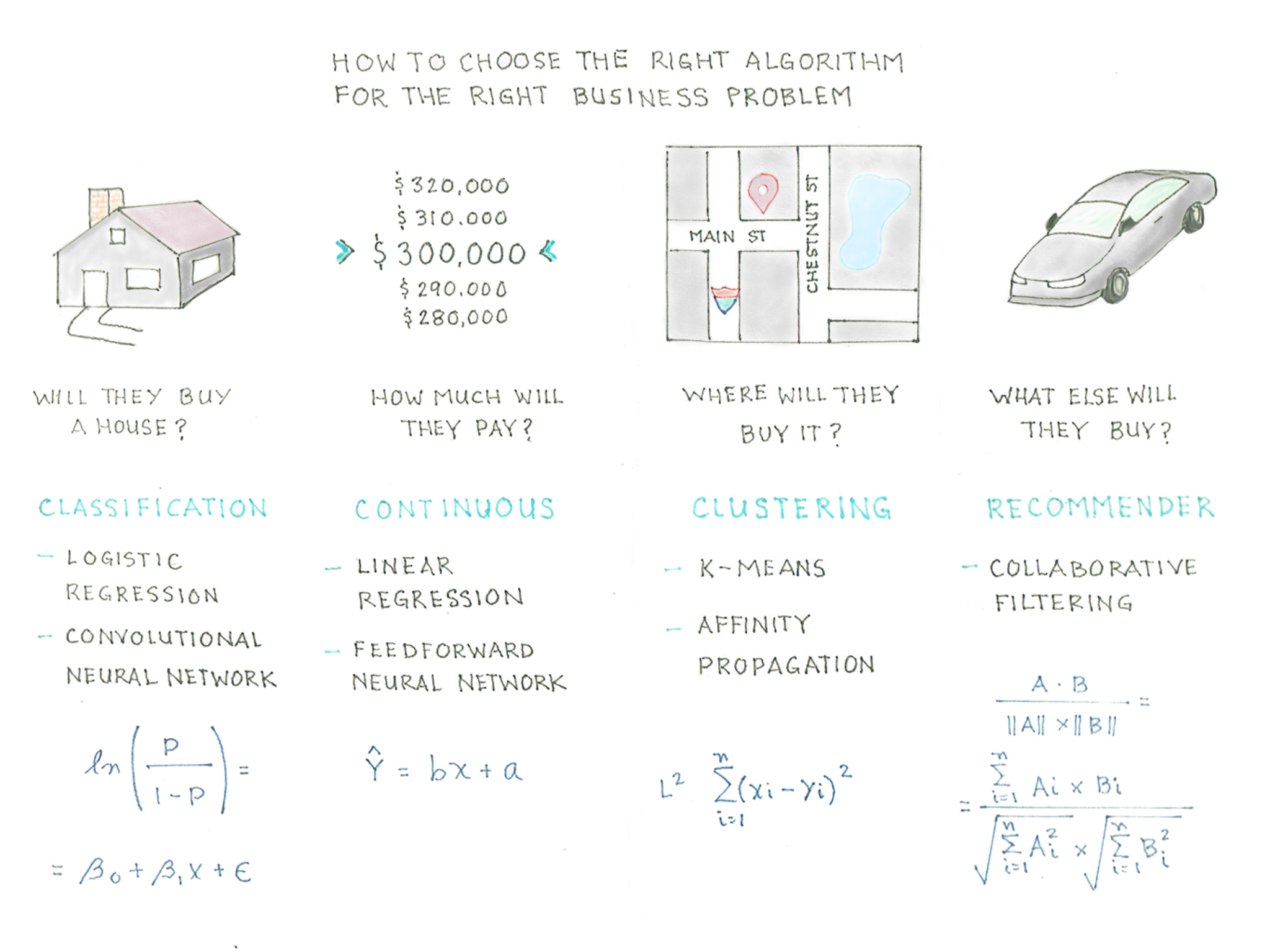

Notebook Thoughts: Choosing the Right AI Algorithm for the Right Problem

There are countless algorithms we can use to mathematically predict an outcome to a business challenge. However, the most widely used algorithms will fall into four categories: classification, continuous, clustering and recommendation.

Let’s use a real life example to illustrate how we choose the right algorithm to solve the right problem. For illustration purposes we are making a number of assumptions to keep things simple for the non-analyst.

Let’s say that a realtor is trying to answer the following questions:

- Will a couple buy a house? Here we are looking for a categorical answer of Yes or No. For this we would use some kind of Classification algorithm, which could include Logistic Regression, Decision Trees or a Convolutional Neural Network.

- How much will they pay for the house? For this question we would use Continuous estimation, as we are trying to determine a numeric value along a continuous range. In this case, one would likely use a Linear Regression algorithm.

- Where will they buy the house? Clustering would be the best approach to determine where they are likely to buy a house. K-means and Affinity

- If they buy a house, what else will they buy? Recommender System Algorithms are commonly used to determine next best offer or next best action. The most commonly used Recommender algorithm is Collaborative Filtering: either user-to-user or item-to-item.
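For the "where will they buy" question, here is a minimal K-means sketch in plain Python. It assumes we have map coordinates of homes the couple has viewed (the points below are invented) and that we supply the starting centroids ourselves, which keeps the example deterministic. A production implementation would typically use a library and a smarter initialization.

```python
import math

def k_means(points, initial_centroids, iterations=10):
    """Group points around the nearest centroid, then move each centroid
    to the mean of its group; repeat a fixed number of times."""
    centroids = list(initial_centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        # Assignment step: each point joins its closest centroid.
        for point in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(point, centroids[i]),
            )
            clusters[nearest].append(point)
        # Update step: each centroid moves to the mean of its members.
        for i, members in enumerate(clusters):
            if members:
                centroids[i] = tuple(
                    sum(axis) / len(members) for axis in zip(*members)
                )
    return centroids, clusters

# Hypothetical (x, y) map coordinates of homes the couple has viewed.
viewed_homes = [
    (1.0, 1.0), (1.2, 0.8), (0.8, 1.1),   # one neighborhood
    (5.0, 5.0), (5.2, 4.9), (4.8, 5.1),   # another neighborhood
]

centroids, clusters = k_means(viewed_homes, [(0.0, 0.0), (6.0, 6.0)])
print(centroids)
```

Each centroid marks the center of an area the couple keeps returning to, which is a reasonable first guess at where they are likely to buy.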

Notebook Thoughts: The Four Phases of Digital Transformation

A new business paradigm is forming, one that aligns business intelligence, creativity, big data and technology to deliver relevant connected experiences to a new generation of hyper-connected consumers. To succeed in the coming years, companies will need to fuse together a myriad of technology platforms, segregated business data, and a somewhat ineffective media landscape to reach Millennial consumers and the generations that follow.

From crawling to flying, the journey to becoming a data-driven organization that can deliver connected experiences has four distinct phases. Each of these transformational phases is enabled by five core drivers: data, technology, intelligence, content and experiences.

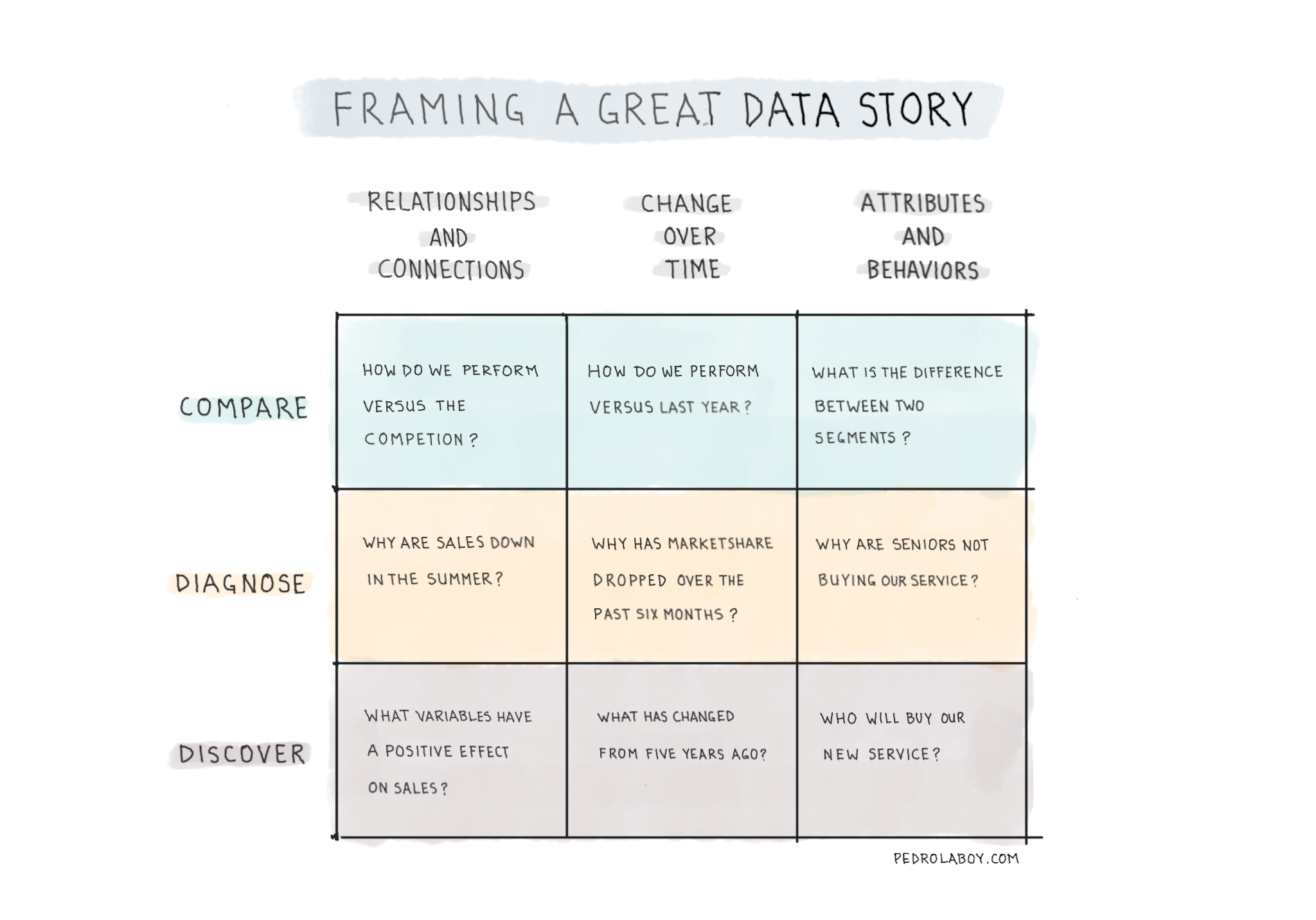

Notebook Thoughts: The Foundation of a Great Data Story

Telling a great data story begins with framing the what and the how of the questions we would like to answer. We can use our data to compare, diagnose and discover information or insights about relationships/connections, shifts over time and attributes/behaviors. However, the best answers usually are the product of a creative combination of approaches and datasets.

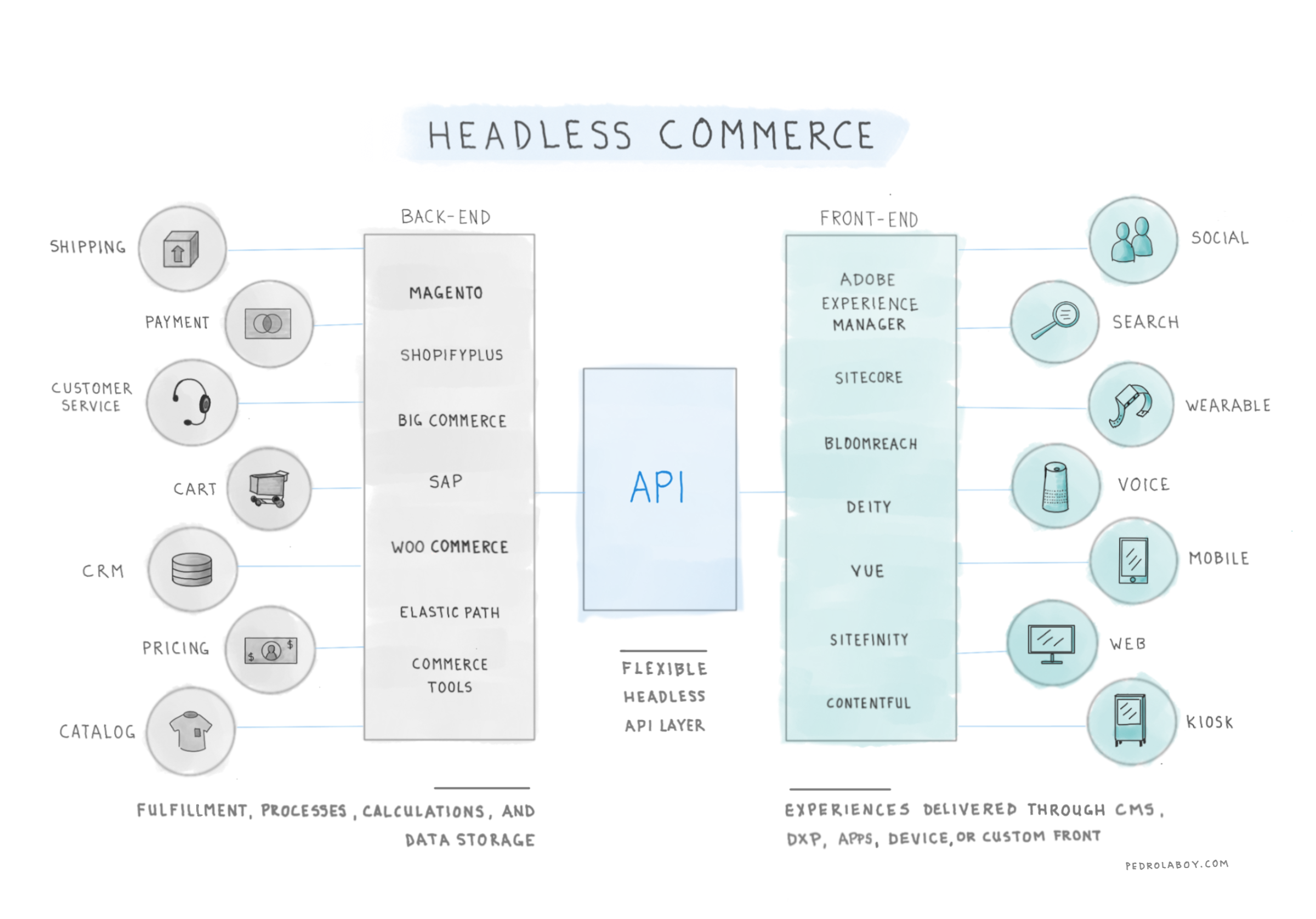

Notebook Thoughts: Understanding Headless Commerce

Headless commerce decouples back-end platforms responsible for fulfillment, operations, processes, calculations and data from front-end platforms responsible for delivering experiences through technologies such as CMS (content management systems), DXPs (digital experience platforms), PWAs (progressive web applications) or custom solutions. One can say that the back-end is “headless” and the front-end offers multiple “heads.” This is made possible through the use of a flexible API layer.
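The decoupling can be sketched in a few lines. In this toy example the catalog, the tax rate and every function name are hypothetical; the point is only that the back end returns presentation-free JSON through one API function, and each "head" decides how to render it.

```python
import json

# --- Back end: owns data and calculations, knows nothing about presentation ---
CATALOG = {"sku-1": {"name": "Desk Lamp", "price_cents": 2500}}
TAX_BASIS_POINTS = 800  # hypothetical 8% tax, kept in integers to avoid rounding

def total_cents(sku):
    """Price plus tax, computed once on the back end."""
    price = CATALOG[sku]["price_cents"]
    return price + price * TAX_BASIS_POINTS // 10_000

# --- API layer: the only thing the heads are allowed to call ---
def api_get_product(sku):
    """Return a presentation-agnostic JSON payload."""
    return json.dumps({
        "sku": sku,
        "name": CATALOG[sku]["name"],
        "total_cents": total_cents(sku),
    })

# --- Heads: each one renders the same payload its own way ---
def web_head(payload):
    product = json.loads(payload)
    return f"<h1>{product['name']}</h1><p>${product['total_cents'] / 100:.2f}</p>"

def voice_head(payload):
    product = json.loads(payload)
    dollars, cents = divmod(product["total_cents"], 100)
    return f"{product['name']} costs {dollars} dollars and {cents} cents."

payload = api_get_product("sku-1")
print(web_head(payload))
print(voice_head(payload))
```

Adding a new head, say a mobile app or an in-store kiosk, means writing one more rendering function; the back end and the API stay untouched.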

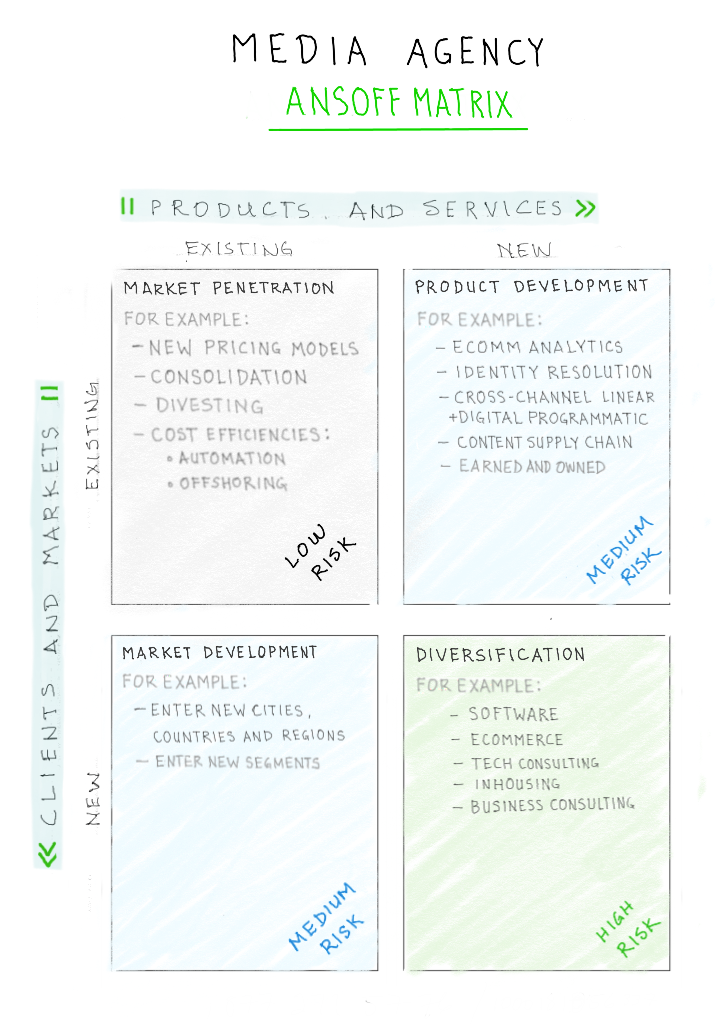

Notebook Thoughts: Media Agencies’ Ansoff Matrix

Recently, I was having a conversation with a friend about strategic choices and risks in our industry. The best way to map the two is to think of them in terms of the Ansoff Matrix. Ansoff argued that business growth can only come from two sources: new products and services and/or new clients and markets.

Market Penetration – One can grow existing clients or win new clients in the current market by offering better value propositions. These could include new pricing models, efficiencies through consolidation or lower costs through off-shoring or automation. This is considered a low risk strategy.

Product Development – One can create new products and services that complement the existing suite. These could include ecommerce analytics, identity resolution, digital/linear cross-channel programmatic or new products around content creation and management. This is considered a medium risk strategy.

Market Development – This one is straightforward. Grow the business by entering new markets with existing and/or new products and services. This is considered a medium risk strategy.

Diversification – One can develop brand new products and services that are outside the current areas of expertise. This could include tech consulting, software development, ecommerce management, or in-housing solutions (within expertise but can cannibalize existing services). This is considered a high risk strategy.

Notebook Thoughts: Using Social Media to Measure Brand Health

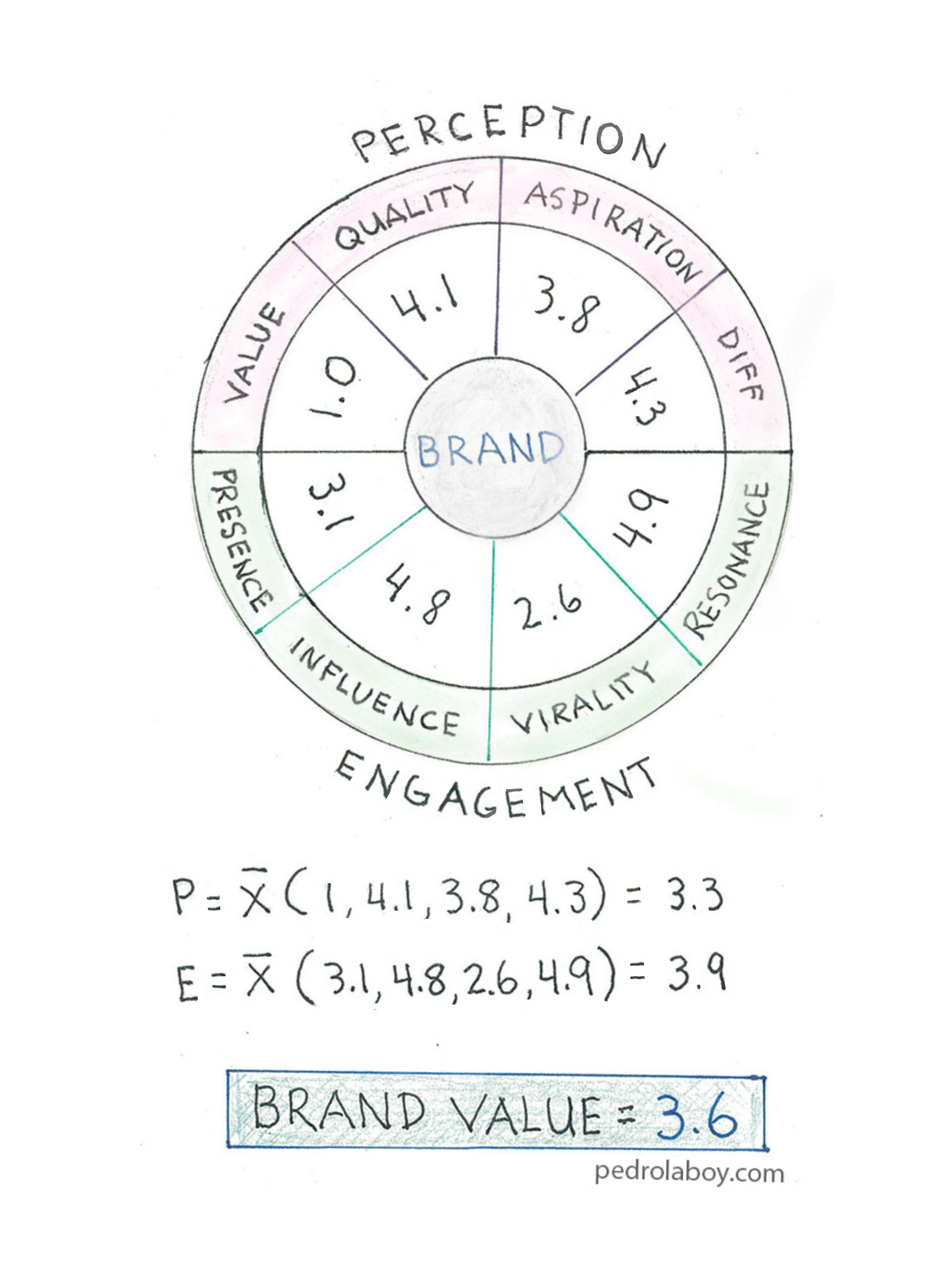

Rather than using sentiment as a proxy for brand health, we should embrace a new model that measures the health of brands in the context of the competitive set and category ecosystem. The model looks at two core areas: Perception and Engagement. On the Perception side we focus on key areas that define thoughts and feelings about the brand. The Engagement side quantifies the reach and strength of the brand and its messaging. All volumes are weighted by sentiment to ensure that brands are not rewarded for negatively driven spikes in activity. Both Perception and Engagement consist of four distinct areas of measurement:

PERCEPTION

- Value: perception of the usefulness and benefit of a product compared to the price charged for it

- Quality: general level of satisfaction with the way a product works and its ability to work as intended

- Aspiration: expressing a longing or wish to own the product or to be associated with the product’s qualities

- Differentiation: the extent to which social media users draw distinctions between the qualities and characteristics of the brand and its competitors

ENGAGEMENT

- Presence: the size of a brand’s owned social communities weighed with the sentiment expressed by the community members toward the brand

- Influence: the ability of a brand to earn unaided mentions as well as have its messaging amplified and shared by the social media community

- Virality: the number of unique people engaged in conversations with or about the brand; weighed with the sentiment expressed by those users

- Resonance: the ability of a brand to engage users with its content and elicit reactions from them
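Here is one way the sentiment weighting could work, sketched in Python. The weights (positive counts in full, neutral counts half, negative counts not at all), the brand names and the mention counts are all assumptions of mine for illustration; the model above does not prescribe a specific formula.

```python
# Assumed weights: how much one mention of each sentiment contributes.
SENTIMENT_WEIGHTS = {"positive": 1.0, "neutral": 0.5, "negative": 0.0}

def sentiment_weighted_volume(mentions):
    """Discount raw mention volume by the sentiment behind it."""
    return sum(
        count * SENTIMENT_WEIGHTS[sentiment]
        for sentiment, count in mentions.items()
    )

def share_of_competitive_set(brand_mentions):
    """Express each brand's weighted volume as a share of the category total."""
    weighted = {
        brand: sentiment_weighted_volume(mentions)
        for brand, mentions in brand_mentions.items()
    }
    total = sum(weighted.values())
    if total == 0:
        return {brand: 0.0 for brand in weighted}
    return {brand: value / total for brand, value in weighted.items()}

# Hypothetical week of mentions: brand_b has a spike driven by complaints.
mentions_by_brand = {
    "brand_a": {"positive": 600, "neutral": 300, "negative": 100},
    "brand_b": {"positive": 200, "neutral": 200, "negative": 1600},
}

shares = share_of_competitive_set(mentions_by_brand)
print(shares)
```

In this made-up week brand_b has twice the raw volume of brand_a, yet ends up with the smaller share because most of its activity is negative.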

Notebook Thoughts – Measuring the Customer Journey

There are many types of marketing measures that can be applied to a customer’s purchase and experience journey. However, what’s important is that these measures can both determine the effectiveness of specific marketing campaigns and predict the likelihood that a customer will move from one stage of the journey to the next.

Here is a sample of the methodologies and/or measures that can be used in the different stages of the journey:

NEED

Market Analysis – Determine market needs

Experience Analysis – Determine prospective customer needs and/or opportunities to improve customer experience

RESEARCH

Awareness – Determine level of brand and product awareness

Segmentation – Segment customers to the smallest groupings feasible

Addressability – Level of addressability for each segment

SELECT

Media Mix Modeling – What are the optimal media channels we should use to reach our audiences

Multi Touch Attribution – How are channels performing for given audiences and campaigns

Brand Preference – What is the consumer brand preference within our category

Qualified Leads – Are we getting the desired level of marketing and sales qualified leads

PURCHASE

Propensity to Purchase – What is the likelihood that our audience will purchase our products

Cart Abandonment – Are prospective customers dropping off before completing a purchase

Conversion Rate – What is the conversion rate for our desired actions

Time to Purchase – What is the timeframe from first touch to purchase

Up-Sell / Cross-Sell – Are we able to up-sell and/or cross-sell to our customers

Share of Wallet – What is the share of wallet

Basket Size – How much are our customers spending per purchase

RECEIVE

Propensity to Return – Are our products being returned at unacceptable rates

EXPERIENCE

Sentiment Analysis – How do customers feel about our brands, products, services and delivered experiences

Customer Effort Score – What is the level of effort required by a customer to resolve an issue

Customer Satisfaction – What is the level of customer satisfaction

Response and Resolution Time – How much time does it take to respond to and resolve an issue

RECOMMEND

Net Promoter Score – What is our customers’ willingness to recommend

Brand Mentions – Is our brand being mentioned positively and at the right level

Revenue Referrals – How much revenue are we generating from referrals

REPURCHASE

Repurchase Rate – Are our customers returning

Frequency – What is the number of purchases within a specific time period

Recency – How many days since the last purchase

Redemption Rate – What is the number of rewards that are being redeemed

Customer Lifetime Value – What is the estimated lifetime value of our targeted segments
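Several of the measures above reduce to simple arithmetic once the data is in hand. The sketch below shows a few of them in Python. The survey ratings, visit counts and order dates are invented, and the function names are mine. Net Promoter Score follows its standard definition (percentage of 9-10 ratings minus percentage of 0-6 ratings); the other formulas are common conventions that your organization may define differently.

```python
from datetime import date

def net_promoter_score(ratings):
    """Percent promoters (9-10) minus percent detractors (0-6), on 0-10 ratings."""
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)

def conversion_rate(conversions, visits):
    """Share of visits that ended in the desired action."""
    return conversions / visits if visits else 0.0

def recency_days(purchase_dates, today):
    """Days since the most recent purchase."""
    return (today - max(purchase_dates)).days

def purchase_frequency(purchase_dates, start, end):
    """Number of purchases inside the window, inclusive of both ends."""
    return sum(1 for d in purchase_dates if start <= d <= end)

def repurchase_rate(orders_by_customer):
    """Share of buyers who purchased more than once."""
    buyers = [dates for dates in orders_by_customer.values() if dates]
    repeaters = [dates for dates in buyers if len(dates) > 1]
    return len(repeaters) / len(buyers) if buyers else 0.0

# Hypothetical data for one quarter.
survey_ratings = [10, 9, 8, 7, 6, 3, 10, 9, 5, 10]
orders = {
    "c1": [date(2024, 1, 5), date(2024, 3, 1)],
    "c2": [date(2024, 2, 10)],
    "c3": [date(2024, 1, 20), date(2024, 2, 20), date(2024, 3, 15)],
    "c4": [],  # registered but never purchased
}
quarter_start, quarter_end = date(2024, 1, 1), date(2024, 3, 31)

nps = net_promoter_score(survey_ratings)
rate = conversion_rate(conversions=30, visits=1200)
c1_recency = recency_days(orders["c1"], today=quarter_end)
c3_frequency = purchase_frequency(orders["c3"], quarter_start, quarter_end)
repeat_share = repurchase_rate(orders)

print(nps, rate, c1_recency, c3_frequency, repeat_share)
```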

OVER TO YOU!

As always, your thoughts and comments are welcomed. What else could we measure at each stage of the journey? What is your approach?